Data Science & Machine Learning Services

Turn raw data into competitive advantage. Our data science and ML team builds predictive models, data pipelines, and AI-powered analytics that help businesses make smarter decisions — from customer churn prediction to sales forecasting and real-time signal analysis.

How we extract value from your data

We don't just analyze data — we build production-grade ML systems. From automated data pipelines and warehouse architecture to predictive models deployed at scale, our team delivers end-to-end data science solutions that integrate directly into your business operations.

We build supervised learning models that forecast future outcomes from historical data: customer churn, sales demand, equipment failures, or market trends. Our approach is to define the business question, engineer features from your data, test multiple algorithms (from gradient boosting to neural networks), and deploy the winning model into your production pipeline. We've delivered predictive models for payment processing companies (2M+ users) and manufacturers (30,000+ SKUs).

Unsupervised learning techniques that reveal hidden segments in your data — customer groups, behavioral patterns, or anomaly clusters. We apply K-means, hierarchical clustering, and DBSCAN to discover structure in unlabeled data, then translate findings into actionable business strategies like targeted marketing, personalized pricing, or fraud detection.
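As a rough illustration of the clustering step, here is a minimal K-means sketch in plain Python. The customer data (monthly spend, orders per month), the function name `kmeans`, and the choice of the first k points as starting centroids are all assumptions made for this example. A production system would normally rely on a tested library rather than hand-written code.

```python
import math

def kmeans(points, k, iterations=20):
    """Tiny K-means: returns (centroids, labels). Illustrative only."""
    # Assumption: seed centroids with the first k points so the run is deterministic.
    centroids = [tuple(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centroids.append(
                    tuple(sum(dim) / len(members) for dim in zip(*members))
                )
            else:
                new_centroids.append(centroids[c])  # empty cluster: keep centroid in place
        if new_centroids == centroids:
            break  # assignments have stopped changing
        centroids = new_centroids
    return centroids, labels

# Hypothetical customers: (monthly spend, orders per month)
customers = [(20, 1), (500, 12), (25, 2), (480, 10), (22, 1), (510, 11)]
centroids, labels = kmeans(customers, k=2)
print(labels)
```

On this toy data the low-spend and high-spend customers fall into separate groups. Real features usually need scaling first, since K-means is sensitive to units.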

Market basket analysis and association rule mining that uncover which items, behaviors, or events occur together. We use techniques like Apriori and FP-Growth to identify co-occurrence patterns — powering product recommendations, cross-selling strategies, and inventory optimization decisions.
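The metrics behind association rules can be sketched in a few lines. The example below counts item pairs by brute force and reports support, confidence, and lift. It is not Apriori or FP-Growth themselves (those algorithms prune the candidate space so the same metrics stay tractable on large catalogs), and the baskets, the `pair_rules` name, and the 0.3 support threshold are made up for illustration.

```python
from collections import Counter
from itertools import combinations

def pair_rules(transactions, min_support=0.3):
    """Return (antecedent, consequent, support, confidence, lift) for item pairs."""
    n = len(transactions)
    item_counts = Counter(item for t in transactions for item in set(t))
    pair_counts = Counter(
        pair for t in transactions for pair in combinations(sorted(set(t)), 2)
    )
    rules = []
    for (a, b), count in pair_counts.items():
        support = count / n  # share of baskets containing both items
        if support < min_support:
            continue
        for x, y in ((a, b), (b, a)):
            confidence = count / item_counts[x]       # P(y in basket | x in basket)
            lift = confidence / (item_counts[y] / n)  # above 1 means x and y co-occur more than chance
            rules.append((x, y, support, confidence, lift))
    return sorted(rules, key=lambda r: r[4], reverse=True)

# Hypothetical shopping baskets
baskets = [
    ["bread", "butter", "milk"],
    ["bread", "butter"],
    ["milk", "coffee"],
    ["bread", "butter", "coffee"],
    ["milk", "bread"],
]
rules = pair_rules(baskets)
for x, y, support, confidence, lift in rules:
    print(f"{x} -> {y}: support={support:.2f} confidence={confidence:.2f} lift={lift:.2f}")
```

Rules with lift above 1 are the candidates for recommendations or cross-selling; rules with lift below 1 indicate items that appear together less often than chance would suggest.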

Computer-based models that simulate complex systems and scenarios — from Monte Carlo risk analysis to supply chain optimization. Simulation modeling is particularly valuable when direct experimentation is impractical or costly, allowing you to test decisions and evaluate outcomes in a risk-free environment before committing resources.
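To make the Monte Carlo idea concrete, the sketch below estimates how often a given stock level would run out while waiting for a replenishment order. Everything about the scenario is an assumption for illustration: the 3 to 7 day lead time, the normally distributed daily demand, and the `stockout_probability` function name.

```python
import random

def stockout_probability(stock, trials=20_000, seed=42):
    """Estimate P(demand during lead time > stock) by simulation."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    stockouts = 0
    for _ in range(trials):
        lead_time_days = rng.randint(3, 7)  # assumed: uniform over 3..7 days
        # Assumed: daily demand ~ Normal(mean=100, sd=20), floored at zero.
        demand = sum(
            max(0.0, rng.gauss(100, 20)) for _ in range(lead_time_days)
        )
        if demand > stock:
            stockouts += 1
    return stockouts / trials

# Compare candidate stock levels before committing to one.
for stock in (500, 600, 700, 800):
    print(stock, round(stockout_probability(stock), 3))
```

Because each stock level is evaluated against the same simulated scenarios, the comparison between levels is not distorted by sampling noise. Swapping in distributions fitted to historical data turns the toy into a usable risk estimate.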

Extracting insights from unstructured text — emails, reviews, support tickets, social media, documents. We apply natural language processing (NLP), sentiment analysis, and named entity recognition to transform text data into structured, actionable intelligence. Combined with LLM integration, we build systems that understand, classify, and respond to text at scale.

Our fields of expertise

We cover the full data science stack — from raw data ingestion through analysis to production ML deployment.

Data Science

Exploratory analysis, hypothesis testing, and statistical modeling to uncover business insights from your data.

Business Intelligence

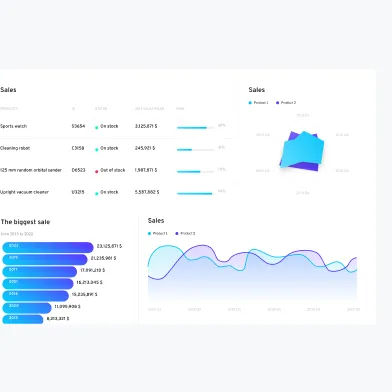

Dashboards, reporting pipelines, and data visualization that give stakeholders real-time visibility into KPIs.

Artificial Intelligence

AI agents, LLM integrations, and intelligent automation systems that augment human decision-making and automate workflows.

Machine Learning

Supervised and unsupervised ML models, from classification and regression to recommendation engines and anomaly detection.

Data Warehousing

ETL pipelines, data lake architecture, and warehouse design that organize your data for fast, reliable analytics.

Cloud & MLOps

AWS-based ML infrastructure: model training, deployment, monitoring, and scaling using SageMaker, Lambda, and managed ML services.

Our data science process

A structured approach from problem definition through deployment and continuous improvement.

01. Define the problem

02. Collect & prepare data

03. Build & train models (ML)

04. Deploy & automate (AI)

05. Measure & iterate

Why invest in data science and ML with Mobile Reality?

What data science delivers

Data science transforms raw data into competitive advantage. Here's what it enables:

- Data-driven decisions: Replace gut feeling with evidence. Analyze customer behavior, market trends, and operational metrics to make informed strategic choices.

- Predictive capabilities: Anticipate churn, forecast demand, predict equipment failures, and model market scenarios before they happen.

- Customer intelligence: Understand segments, preferences, and lifetime value at a granular level — enabling personalized products and targeted marketing.

- Cost optimization: Identify inefficiencies in supply chains, resource allocation, and operations through pattern analysis and simulation.

- Fraud detection: Spot anomalous patterns in transactions, user behavior, and system access that indicate fraudulent activity.

- Revenue growth: Uncover cross-selling opportunities, optimize pricing strategies, and identify high-value customer segments.

What machine learning delivers

Machine learning automates pattern recognition and decision-making at scale:

- Automated decision-making: ML models process data and make predictions or recommendations in real time — from credit scoring to content personalization.

- Continuous improvement: With automated retraining pipelines, models are refreshed on new data as it arrives, maintaining or improving accuracy over time without manual retraining.

- Scale beyond human capacity: Analyze millions of data points, detect patterns across thousands of variables, and process unstructured text/images that humans can't handle manually.

- Predictive maintenance: Anticipate equipment failures and schedule maintenance proactively, reducing downtime and extending asset lifespan.

- Personalization at scale: Deliver individualized experiences to millions of users simultaneously — product recommendations, content feeds, pricing.

- Anomaly detection: Identify outliers and unusual patterns in real time for security, quality control, and compliance monitoring.

Our recommendation

We recommend starting with a focused proof-of-concept on your highest-impact use case — whether that's churn prediction, demand forecasting, or process automation. A well-scoped 4-8 week engagement delivers measurable results and validates the approach before scaling. Our AI-first methodology means we leverage LLM integration and AI-assisted development throughout, compressing timelines and reducing costs compared to traditional data science engagements.

Case studies

See our expertise in action in our case studies, which cover projects ranging from mobile apps to data analysis.

Data Science Services – Frequently Asked Questions

Data science as a service in 2026 is rarely "build a model and ship." A practical engagement covers: (1) problem framing — converting a business question into a measurable target with the right success metric; (2) data discovery and engineering — auditing what data exists, building ingestion and feature pipelines; (3) modeling — choosing between classical ML, gradient boosting, deep learning, or LLM-based approaches based on data shape and latency budget; (4) evaluation — beyond accuracy, including fairness, drift sensitivity, explainability; (5) MLOps deployment — model registry, monitoring, retraining cadence. Skipping any of these usually means a pretty notebook that never reaches production.

Classical ML (logistic regression, gradient boosting, random forests) wins when you have structured tabular data, clear labels, and a need for predictable latency and cost. Examples: churn prediction, fraud scoring, demand forecasting, credit risk. LLM-based approaches win when the input is unstructured text or multimodal, when examples are scarce (few-shot prompting beats training from scratch), or when the task involves reasoning over context (RAG, classification with nuance, summarization, extraction). Often the right answer is hybrid: a classical model for the prediction, and an LLM for the explanation or for handling messy inputs upstream. Don't reach for an LLM by default: it's slower, more expensive, and harder to evaluate than a properly trained gradient-boosted model.

The path that survives contact with production: (1) refactor the notebook into a versioned package with deterministic feature engineering; (2) experiment tracking via MLflow, Weights & Biases, or Comet — every model has a logged training run with data fingerprint, hyperparameters, and metrics; (3) CI/CD pipeline that retrains on schedule (Airflow, Prefect, Dagster) and evaluates against holdout before promotion; (4) model registry with stages (staging / production) and the ability to roll back; (5) inference serving appropriate to the latency budget — batch (cron + S3), real-time (FastAPI / SageMaker endpoint), or streaming (Kafka + online feature store); (6) monitoring for drift, prediction distribution shifts, and feature null rates.
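As one possible way to implement the drift monitoring in step (6), the sketch below computes a Population Stability Index (PSI) for a single numeric feature. PSI is our choice for the example, not the only option, and the function name, the equal-width binning, and the sample data are assumptions.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values into edge bins
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values: training-time baseline vs. a shifted production sample.
baseline = list(range(100))
shifted = [v + 30 for v in baseline]

print(population_stability_index(baseline, baseline))
print(population_stability_index(baseline, shifted))
```

A commonly quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a shift worth investigating, though the thresholds should be tuned per feature. The same check can run on prediction distributions as well as input features.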

A pragmatic 2026 stack: Python as the primary language (NumPy, pandas, scikit-learn, PyTorch, XGBoost / LightGBM); R for statistical work where it dominates (clinical, econometrics, certain time series); SQL + dbt for analytics engineering and feature pipelines; Jupyter / VS Code for exploration. For infra: AWS (SageMaker, S3, Athena, Glue) or GCP (Vertex AI, BigQuery), Docker for reproducibility, Airflow / Prefect / Dagster for orchestration. Vector stores (pgvector, Pinecone, OpenAI vector store) when LLM-based retrieval is involved. Model serving via FastAPI or SageMaker endpoints. Tooling discipline matters more than tool choice — pick a stack the team knows and stick with it.

Tie the model to a business metric, not a model metric. A churn model with 0.85 AUC means nothing on its own — what matters is incremental revenue retained per dollar spent on retention campaigns triggered by the model. Frame each project with a clear hypothesis (e.g., "reduce false positives in fraud by 30% to recover X annually in lost transactions") and an A/B test or before-after comparison post-deployment. Realistic timelines: 3–6 months for an MVP that earns its keep on a focused use case; 6–12 months for a platform play (feature store, multi-model pipeline). Projects that fail usually fail at framing or data quality, not at modeling.
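The churn example above can be turned into simple arithmetic. The sketch below converts model output into revenue retained per dollar of campaign spend. All inputs (number of flagged customers, precision, save rate, customer value, contact cost) are made-up numbers, and `retention_campaign_value` is a hypothetical helper.

```python
def retention_campaign_value(flagged, precision, save_rate, customer_value, cost_per_contact):
    """Net value and return per dollar of a model-triggered retention campaign."""
    true_churners = flagged * precision    # flagged customers who would really churn
    retained = true_churners * save_rate   # of those, how many the offer keeps
    revenue_retained = retained * customer_value
    spend = flagged * cost_per_contact     # every flagged customer receives the offer
    return {
        "revenue_retained": revenue_retained,
        "spend": spend,
        "net_value": revenue_retained - spend,
        "return_per_dollar": revenue_retained / spend,
    }

# All inputs below are made-up numbers for illustration.
result = retention_campaign_value(
    flagged=1_000,
    precision=0.40,
    save_rate=0.25,
    customer_value=600.0,
    cost_per_contact=20.0,
)
print(result)
```

With these inputs the campaign retains roughly 60,000 in revenue on 20,000 of spend, or about 3 per dollar. The save rate is the number an A/B test against a holdout group should measure, because the model's AUC says nothing about it.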

Start your AI agent project today

Request a call today and get a free consultation with our specialists about your custom software solution. Get your first working demo in just 7 days from project kick-off.

Matt Sadowski

CEO of Mobile Reality